- Causal inference is the discipline of establishing whether a marketing action caused a business outcome rather than merely correlating with it, which makes it the foundation of credible lead attribution.

- Standard attribution models (last-touch, linear, data-driven) measure credit allocation, not causation; causal inference answers the harder question: what would have happened without this channel?

- Methods like Difference-in-Differences, geo-experiments, and holdout testing allow revenue marketing teams to isolate true channel incrementality and make defensible budget decisions.

What Is Causal Inference?

Causal inference is a statistical framework for determining whether a specific intervention — a marketing campaign, channel activation, or touchpoint — directly produced a measurable outcome, independent of confounding variables.

Where correlation tells you two metrics move together, causal inference tells you whether one is actually driving the other.

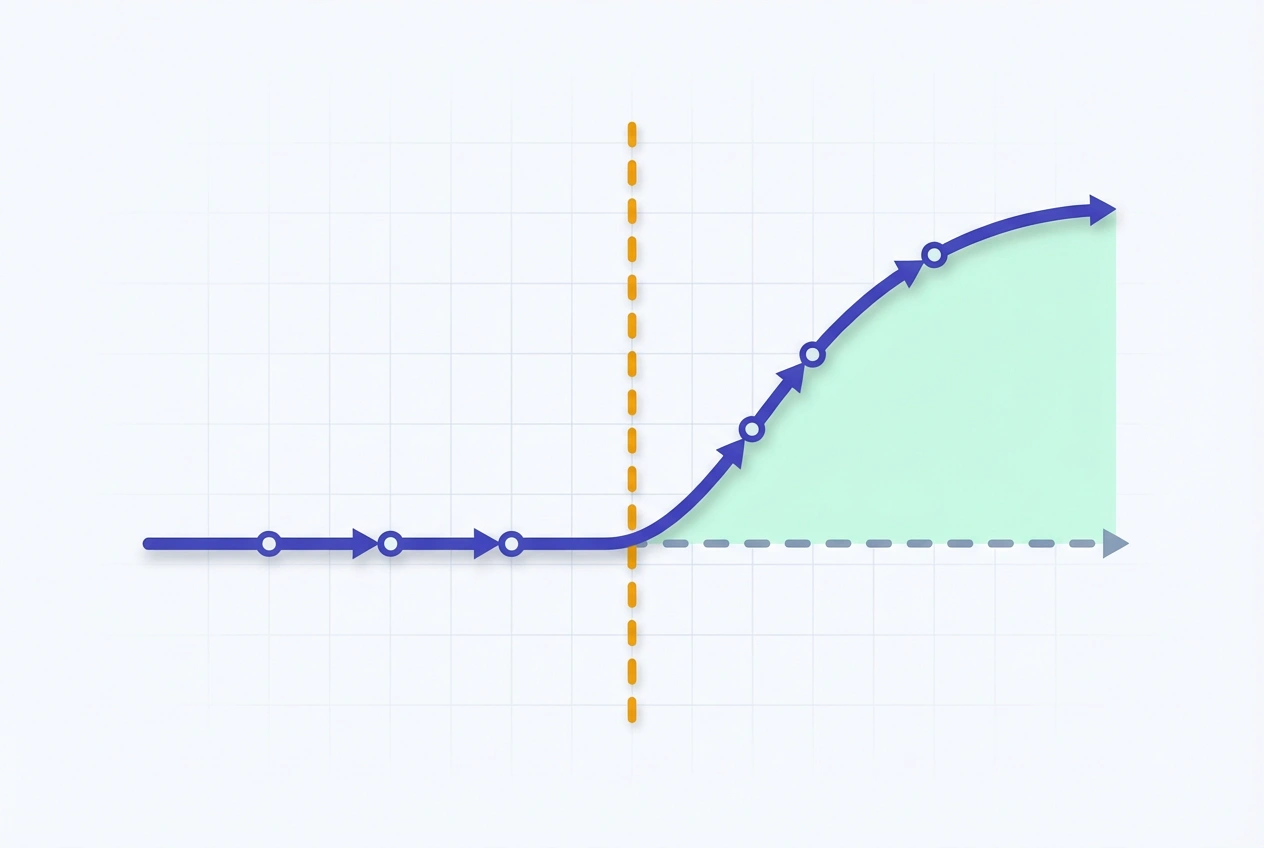

In marketing measurement, the core question is the counterfactual: what conversion volume, MQL rate, or pipeline value would have existed had the marketing activity never run? The gap between observed reality and that counterfactual baseline is the true causal effect.

This matters at the executive level because every attribution model — from last-touch to algorithmic — is fundamentally an approximation. Causal inference replaces that approximation with a statistically defensible answer.


Why It Matters for Lead Attribution

Most attribution platforms answer “which channels were present before conversion?” Causal inference answers “which channels actually drove conversion?”

The distinction is not academic. A brand running simultaneous paid search, paid social, and content programs will see every channel claim conversion credit under standard multi-touch models. That overlap inflates reported ROAS across the board and makes channel-level budget decisions unreliable.

According to Forrester, 72% of B2B marketing organizations report low confidence in their attribution data when making budget allocation decisions. The gap is structural: attribution models built on observed touchpoints cannot control for selection bias, seasonality, or the baseline demand that would have converted organically.

Causal inference closes that gap. By isolating the incremental lift each channel produces — the conversions that would not have happened without it — it transforms attribution from a credit-allocation exercise into a genuine ROI measurement framework.

For lead generation specifically, this means identifying which channels are producing net-new MQLs versus cannibalizing organic or direct traffic that was already converting.

How Causal Inference Works

The theoretical foundation is the Potential Outcomes Framework (Rubin, 1974), which defines causality through two states for every unit: the outcome when exposed to treatment (Y₁) and the outcome when not exposed (Y₀).

The causal effect for any individual unit is Y₁ − Y₀. The practical challenge is that both states can never be observed simultaneously — you either ran the campaign or you didn’t.

The solution is to construct a credible control group: a comparable population that did not receive the treatment, against which the treated group’s outcome can be measured.

Average Treatment Effect (ATE):

ATE = E[Y₁ − Y₀]

In practice it is estimated as: Mean outcome (treated group) − Mean outcome (control group)

The validity of any causal estimate rests on one core assumption: that the control group represents what the treated group’s outcome would have been in the absence of treatment. Violations of this assumption (through selection bias, spillover effects, or temporal confounds) invalidate the causal estimate entirely.
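As a minimal sketch of the difference-in-means estimate, the snippet below computes an ATE from two small groups. The conversion data and the function name `average_treatment_effect` are hypothetical, and the result is only a causal estimate if the control group is genuinely comparable to the treated group.

```python
from statistics import mean


def average_treatment_effect(treated_outcomes, control_outcomes):
    """Difference between the mean outcome of the treated and control groups."""
    return mean(treated_outcomes) - mean(control_outcomes)


# Hypothetical data: 1 = lead became an MQL, 0 = lead did not.
treated = [1, 0, 1, 1, 0, 1, 0, 1, 1, 0]  # 6 of 10 converted
control = [0, 0, 1, 0, 1, 0, 0, 1, 0, 0]  # 3 of 10 converted

ate = average_treatment_effect(treated, control)
print(f"Estimated ATE: {ate:.2f}")  # prints "Estimated ATE: 0.30"
```

With groups this small the estimate is far too noisy to act on; the example only shows the arithmetic.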

Key Methods and Frameworks

No single method dominates marketing causal analysis. Method selection depends on data availability, experimental control, and the specific causal question being answered.

Randomized Controlled Trials (RCTs)

The gold standard. Users are randomly assigned to treatment (exposed to a campaign) or control (withheld from it), with randomization eliminating selection bias by design.

In digital marketing, RCTs manifest as holdout tests: typically a 10–20% traffic or audience holdout that does not see a given campaign during the measurement window. Because assignment is random, the conversion delta between exposed and holdout groups is an unbiased estimate of the causal effect.
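A holdout readout can be sketched as a two-proportion comparison. The example below is an illustration under stated assumptions, not a prescribed method: it uses a standard two-proportion z-test with a normal approximation, and the counts and the function name `holdout_lift` are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def holdout_lift(conv_exposed, n_exposed, conv_holdout, n_holdout):
    """Absolute lift, two-sided p-value, and 95% CI for exposed vs. holdout."""
    p_exposed = conv_exposed / n_exposed
    p_holdout = conv_holdout / n_holdout
    lift = p_exposed - p_holdout

    # Pooled standard error for the hypothesis test (null: no difference).
    p_pool = (conv_exposed + conv_holdout) / (n_exposed + n_holdout)
    se_pool = sqrt(p_pool * (1 - p_pool) * (1 / n_exposed + 1 / n_holdout))
    z = lift / se_pool
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))

    # Unpooled standard error for the confidence interval around the lift.
    se = sqrt(p_exposed * (1 - p_exposed) / n_exposed
              + p_holdout * (1 - p_holdout) / n_holdout)
    z95 = NormalDist().inv_cdf(0.975)
    return {
        "lift": lift,
        "p_value": p_value,
        "ci_95": (lift - z95 * se, lift + z95 * se),
    }


# Hypothetical test: 90% of the audience exposed, 10% held out.
result = holdout_lift(conv_exposed=540, n_exposed=9000,
                      conv_holdout=45, n_holdout=1000)
print(result)
```

Reporting the interval alongside the point estimate makes it visible when a test cannot distinguish real lift from noise.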

Difference-in-Differences (DiD)

Used when full randomization is impractical. DiD compares the change in outcomes in a treated group before and after an intervention against the same change in a control group over the same period.

DiD = (Y_treated_post − Y_treated_pre) − (Y_control_post − Y_control_pre)

In lead attribution, DiD is commonly applied in geo-experiments: activating a channel in specific markets while holding others dark, then comparing MQL or pipeline deltas across geographies.
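The DiD formula can be sketched directly in code. The weekly MQL counts below are hypothetical, and the estimate is only meaningful if treated and control markets would have followed parallel trends without the intervention.

```python
from statistics import mean


def difference_in_differences(treated_pre, treated_post, control_pre, control_post):
    """Change in the treated group minus the change in the control group."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Hypothetical weekly MQL counts for a geo-experiment.
treated_pre = [100, 104, 98, 102]    # active markets, before launch (mean 101)
treated_post = [120, 126, 118, 124]  # active markets, after launch (mean 122)
control_pre = [80, 82, 78, 80]       # dark markets, before launch (mean 80)
control_post = [86, 90, 84, 88]      # dark markets, after launch (mean 87)

did = difference_in_differences(treated_pre, treated_post, control_pre, control_post)
print(f"Incremental MQLs per week: {did}")  # (122 - 101) - (87 - 80) = 14
```

The control markets rose by 7 MQLs per week on their own, so only 14 of the 21-MQL increase in the active markets is credited to the channel.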

Instrumental Variables (IV)

Applied when the treatment itself is confounded — for example, when higher-intent leads are more likely to receive retargeting, making retargeting appear more effective than it is. An instrument is a variable that affects exposure to treatment but has no direct effect on the outcome, allowing the causal pathway to be isolated.
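For a binary instrument, one standard IV estimator is the Wald estimator: the instrument's effect on the outcome divided by its effect on treatment exposure. The sketch below assumes a hypothetical instrument (randomly assigned eligibility for retargeting) and made-up records; whether a real instrument satisfies the required assumptions has to be argued case by case.

```python
from statistics import mean


def wald_iv_estimate(records):
    """Wald estimator for records of (instrument, treated, outcome), all 0 or 1."""
    outcome_z1 = mean(y for z, d, y in records if z == 1)
    outcome_z0 = mean(y for z, d, y in records if z == 0)
    treated_z1 = mean(d for z, d, y in records if z == 1)
    treated_z0 = mean(d for z, d, y in records if z == 0)

    first_stage = treated_z1 - treated_z0  # how strongly z shifts exposure
    if abs(first_stage) < 1e-12:
        raise ValueError("instrument does not change treatment exposure")
    return (outcome_z1 - outcome_z0) / first_stage


# Hypothetical records: z = eligible for retargeting (randomly assigned),
# d = actually retargeted, y = converted.
records = (
    [(1, 1, 1)] * 5 + [(1, 1, 0)] * 3 + [(1, 0, 0)] * 2    # z = 1 group
    + [(0, 1, 1)] + [(0, 1, 0)] + [(0, 0, 1)] + [(0, 0, 0)] * 7  # z = 0 group
)

effect = wald_iv_estimate(records)
print(f"IV estimate of retargeting effect: {effect:.2f}")  # (0.5 - 0.2) / (0.8 - 0.2)
```

A first stage close to zero signals a weak instrument, in which case the ratio becomes unstable and should not be trusted.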

Regression Discontinuity Design (RDD)

Exploits sharp eligibility thresholds to estimate causal effects. In marketing, a practical application is budget pacing: leads exposed to campaigns just before a daily budget cap compare against those who would have been exposed just after, with proximity to the threshold serving as a natural control.
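A sharp RDD can be sketched as two local linear fits, one on each side of the threshold, with the effect read off as the gap between them at the cutoff. Everything below is illustrative: the running variable (minutes relative to the budget cap), the conversion rates, the bandwidth, and the function names are assumptions, and units below the cutoff are taken to be the treated side.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def rdd_effect(points, cutoff, bandwidth):
    """Gap between treated-side and untreated-side fits at the cutoff.

    points: (running_variable, outcome) pairs; values below the cutoff are treated.
    """
    left = [(x - cutoff, y) for x, y in points if cutoff - bandwidth <= x < cutoff]
    right = [(x - cutoff, y) for x, y in points if cutoff <= x <= cutoff + bandwidth]
    if len(left) < 2 or len(right) < 2:
        raise ValueError("need at least two points on each side of the cutoff")
    left_intercept, _ = fit_line(*zip(*left))
    right_intercept, _ = fit_line(*zip(*right))
    return left_intercept - right_intercept


# Hypothetical data: x = minutes relative to the budget cap (negative = before
# the cap, so still exposed), y = conversion rate of leads arriving that minute.
points = ([(m, 0.10 + 0.002 * m) for m in range(-5, 0)]
          + [(m, 0.06 + 0.002 * m) for m in range(0, 5)])

effect = rdd_effect(points, cutoff=0, bandwidth=5)
print(f"Estimated effect at the threshold: {effect:.3f}")
```

The estimate applies only to units near the threshold, and it is sensitive to the bandwidth choice, so results are usually checked across several bandwidths.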

Building Causal Inference Into Your Marketing Stack

Operationalizing causal inference in a revenue marketing team requires four sequential steps.

- Establish counterfactual baselines before activating channels.

Pre-campaign baseline periods and holdout group definitions must be locked before the experiment begins. Retroactive counterfactual construction introduces significant estimation error. Your lead attribution platform should capture source-level data at the lead level to support pre/post comparison with sufficient granularity.

- Select the experimental design that matches your data constraints.

For teams with sufficient traffic volume (>5,000 monthly leads), user-level holdout RCTs produce the cleanest estimates. For mid-market teams, geo-based DiD experiments using regional holdout markets are a practical alternative. Match the design to your sample size, not your preference.

- Instrument your CRM with lead-level source data throughout the funnel.

Causal estimates are only as precise as your outcome measurement. If your CRM attribution stops at MQL and doesn’t track through SQL, opportunity, and closed-won, you risk optimizing for conversion volume rather than revenue causality. Lead source data must persist from first touch through deal close to support full-funnel causal analysis.

- Run incrementality tests at the channel level, not the campaign level.

Campaign-level holdouts answer tactical questions. Channel-level holdouts (withholding an entire channel for a defined period) answer strategic ones: does paid social incrementally drive pipeline, or is that pipeline converting organically regardless? The latter question is what CMO-level budget decisions require.
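The requirement that lead source data persist through every funnel stage can be sketched as a simple record structure. The field names, stage list, and `stage_rate` helper below are hypothetical and do not describe any particular CRM or attribution platform's schema.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical funnel stages, ordered from earliest to latest.
STAGES = ["lead", "mql", "sql", "opportunity", "closed_won"]


@dataclass
class LeadRecord:
    lead_id: str
    source_channel: str      # first-touch channel, kept unchanged through the funnel
    campaign: Optional[str]  # campaign identifier, if one was captured
    in_holdout: bool         # True if the lead belonged to the control group
    furthest_stage: str      # one of STAGES


def stage_rate(leads, stage, in_holdout):
    """Share of leads in one experiment arm that reached `stage` or later."""
    arm = [lead for lead in leads if lead.in_holdout == in_holdout]
    if not arm:
        raise ValueError("no leads in this arm")
    target = STAGES.index(stage)
    reached = sum(STAGES.index(lead.furthest_stage) >= target for lead in arm)
    return reached / len(arm)


leads = [
    LeadRecord("a1", "paid_social", "q3_launch", False, "closed_won"),
    LeadRecord("a2", "paid_social", "q3_launch", False, "mql"),
    LeadRecord("a3", "paid_social", None, False, "sql"),
    LeadRecord("a4", "paid_social", None, False, "lead"),
    LeadRecord("b1", "organic", None, True, "mql"),
    LeadRecord("b2", "organic", None, True, "lead"),
]

# Compare arms at a downstream stage rather than stopping at MQL.
sql_lift = stage_rate(leads, "sql", False) - stage_rate(leads, "sql", True)
print(f"SQL-stage lift: {sql_lift:.2f}")
```

Because each record carries both its source and its experiment arm, the same comparison can be repeated at any stage from MQL through closed-won.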

| Method | Best For | Data Requirement | Execution Complexity |
|---|---|---|---|
| RCT / Holdout Test | Channel incrementality, creative testing | High volume (>5K leads/mo) | Medium |
| Difference-in-Differences | Geo-experiments, budget activation | Comparable market pairs | Medium–High |
| Instrumental Variables | Confounded retargeting, intent bias | Valid instrument required | High |
| Regression Discontinuity | Budget pacing, threshold effects | Threshold data required | High |
| Synthetic Control | Market-level channel launches | Long pre-period time series | Very High |

Common Pitfalls to Avoid

Causal inference in marketing fails at predictable points. Understanding them prevents costly misattribution.

- SUTVA violations: The Stable Unit Treatment Value Assumption requires that one unit’s treatment does not affect another’s outcome. In digital advertising, audience overlap between holdout and treatment groups — caused by lookalike targeting or shared cookie pools — contaminates the control and inflates the measured causal effect.

- Novelty effects: New channels or creative treatments consistently outperform in the first measurement window, not because of genuine causal lift but because of user curiosity. Causal estimates from experiments shorter than one full business cycle overstate incrementality.

- Confounding the brand effect: In high-brand-equity categories, a significant share of conversions attributed to paid channels are actually brand-demand conversions that would have occurred through direct or organic search. Failing to isolate branded from non-branded traffic in holdout designs overstates paid channel causality.

- Underpowered experiments: Causal estimates from holdout tests with insufficient sample size produce wide confidence intervals that cannot distinguish real lift from noise. Before running any incrementality test, calculate the minimum detectable effect (MDE) against your available sample — a 10% holdout on a 500-lead-per-month funnel will not generate statistically valid causal estimates.

- Single-period generalization: Causal effects measured during Q4 peak demand periods are not valid proxies for full-year channel performance. Seasonal confounds require either year-over-year DiD designs or rolling holdout windows that span multiple demand cycles.
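The underpowered-experiment pitfall can be made concrete with a rough MDE calculation. This is a sketch using the usual normal approximation for a difference in proportions; the 20% baseline conversion rate, 5% significance level, and 80% power are assumptions chosen for illustration.

```python
from math import sqrt
from statistics import NormalDist


def minimum_detectable_effect(n_treated, n_control, baseline_rate,
                              alpha=0.05, power=0.80):
    """Approximate absolute MDE for a two-sided test on conversion rates."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    se = sqrt(baseline_rate * (1 - baseline_rate)
              * (1 / n_treated + 1 / n_control))
    return (z_alpha + z_power) * se


# The 500-lead funnel with a 10% holdout from the pitfall above.
monthly_leads = 500
holdout_share = 0.10
n_control = round(monthly_leads * holdout_share)  # 50 leads held out
n_treated = monthly_leads - n_control             # 450 leads exposed

mde = minimum_detectable_effect(n_treated, n_control, baseline_rate=0.20)
print(f"Absolute MDE after one month: {mde:.3f}")
```

Under these assumptions the test can only detect an absolute change of roughly 17 percentage points on a 20% baseline, which is far larger than most channels plausibly deliver.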

Frequently Asked Questions

What is the difference between causal inference and marketing attribution?

Attribution models distribute conversion credit across observed touchpoints using predefined rules or algorithms. Causal inference determines whether those touchpoints actually produced the conversion — a fundamentally different question. Attribution answers “who was present?” Causal inference answers “who was responsible?” A channel can receive high attribution credit while having low or zero causal impact if the conversions it touches would have happened anyway through other means.

Can causal inference be applied to small lead generation programs?

Yes, but method selection must match sample size. User-level RCTs require high lead volume to reach statistical significance; teams generating fewer than 2,000 leads per month are better served by geo-based DiD experiments or synthetic control methods that exploit time-series variation rather than cross-sectional holdouts. The critical constraint is achieving sufficient power to detect the minimum effect size that would be actionable for budget decisions.

How does causal inference relate to Marketing Mix Modeling (MMM)?

MMM is a macro-level causal modeling approach that uses historical spend and outcome data to estimate the contribution of each channel to aggregate revenue. Unlike holdout experiments, MMM does not require active experimental design — it infers causality from observational data using regression techniques with diminishing returns curves. The tradeoff: MMM operates at aggregate level and cannot produce lead-level or account-level causal estimates, making it complementary to — not a replacement for — experimental causal methods.

What lead attribution data is required to run causal inference experiments?

At minimum, you need lead-level source data (channel, campaign, and touchpoint), a reliable conversion timestamp, and a mechanism for constructing or identifying a control group. For full-funnel causal analysis that measures incrementality through SQL and closed-won, the lead source data must persist in your CRM through every pipeline stage. Attribution platforms that capture source data at the form submission level and pass it to CRM contact records enable this end-to-end traceability.

How long should a causal inference holdout experiment run?

Minimum duration is determined by three factors: the time required to accumulate sufficient sample size in both treatment and control groups, the length of your typical sales cycle (to observe downstream pipeline effects), and the need to span at least one full business-cycle period to avoid seasonal confounds. For B2B SaaS with 30–90 day sales cycles, experiments shorter than 6 weeks rarely produce actionable causal estimates. High-ticket enterprise programs should plan for 90-day minimum windows.

Is causal inference only useful for digital channels?

No. Causal inference methods apply to any channel where a control group can be constructed — including direct mail (holdout zip codes), events (matched non-attendee cohorts), and outbound SDR programs (randomized account assignment). The experimental design adapts to the channel mechanism; the underlying causal logic remains identical. For offline-to-online lead generation, geo-based DiD designs are particularly effective at isolating the incremental MQL contribution of offline channel activation.

How do I know if my causal estimates are valid?

Validity rests on three testable conditions: (1) parallel trends — treatment and control groups moved in sync before the intervention; (2) no spillover — treatment group exposure did not contaminate the control group; and (3) balance — pre-experiment characteristics of both groups are statistically similar. Failing any of these conditions requires either a redesigned experiment or a methodological adjustment such as propensity score matching to rebalance confounded groups before computing the causal estimate.
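Two of these checks can be sketched with simple diagnostics: a standardized mean difference (SMD) for balance and a comparison of period-over-period changes for parallel trends. The data, function names, and the rule of thumb that an absolute SMD below roughly 0.1 indicates balance are assumptions for illustration, not fixed standards.

```python
from math import sqrt
from statistics import mean, variance


def standardized_mean_difference(treated, control):
    """Difference in means scaled by the pooled standard deviation."""
    pooled_sd = sqrt((variance(treated) + variance(control)) / 2)
    if pooled_sd == 0:
        raise ValueError("both groups have zero variance")
    return (mean(treated) - mean(control)) / pooled_sd


def max_pre_trend_gap(treated_pre, control_pre):
    """Largest gap between the groups' period-over-period changes pre-launch."""
    treated_changes = [b - a for a, b in zip(treated_pre, treated_pre[1:])]
    control_changes = [b - a for a, b in zip(control_pre, control_pre[1:])]
    return max(abs(t - c) for t, c in zip(treated_changes, control_changes))


# Hypothetical pre-experiment covariate: average deal size (in $k) per segment.
smd = standardized_mean_difference([10, 12, 14, 16], [9, 12, 14, 15])

# Hypothetical weekly MQLs before the intervention.
gap = max_pre_trend_gap([100, 104, 108, 112], [80, 84, 88, 92])

print(f"SMD: {smd:.2f}, max pre-trend gap: {gap}")
```

Here the pre-trends match exactly (gap of 0), while the SMD of about 0.19 suggests the groups may need rebalancing before the estimate is computed.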